Voice Assistants: What is all the noise about?

Divvya Behal

Alexa! Uhh-leksa!! AlaaiksAAA!!!

Trying to get your voice heard? Well, you are not alone.

We’ve all had our moments with voice assistants, some freaky and some downright silly. ‘Importance of being heard’ has a whole new meaning nowadays! As if being ignored by our governments, families and friends was not enough, we are now treated the same even by our voice assistants! So hurtful. Psychologists might soon need to conduct sessions like:

‘Heal Your Soul – Listen and be heard by Siri’

‘Mend the communication gap with Alexa’

‘When everything is not OK with Google’

It’s a love-hate relationship with our dear voice assistants: we can’t live with them or without them. But even with all our frustrations, the entertainment quotient that voice assistants (VAs) bring to our lives is undeniable.

We are surrounded by VAs, be it Google Assistant, Siri or Alexa. In fact, if we start counting all the devices used by our family and friends, there are probably more VAs around us than humans. Everyone wants to own them, talk to them and feel like they are part of some futuristic setting. But how often do we really use them, and for what? Honestly, I rarely do. ‘Voice Match’ (the perpetually listening mode) on my phone is always off since it drains the battery. It also becomes annoying when it is activated by anything even remotely resembling ‘Hey Google’. I prefer to just tap the mic when I need Google to hear me. In fact, I mostly end up executing the task myself, rather than nurturing a relationship with The Voice. Even the Echo speaker in our office gets used only to play music. It usually doesn’t even understand the songs we want to hear, so we control the music ourselves via Bluetooth. Alexa might start feeling left out soon enough.

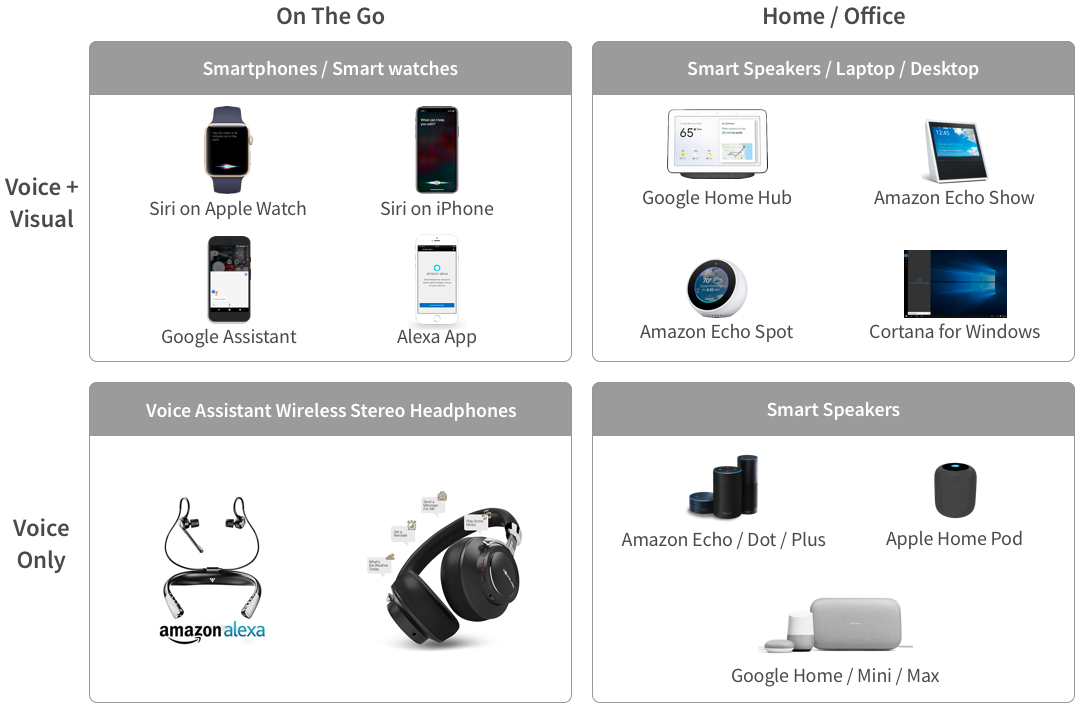

Never mind my world, the numbers show that more and more people are collecting voice assistants in one form or another! There is a supernova-like boom in the VA market. There are so many of them nowadays, with more on the way. To get an overall picture, we have divided the VAs as follows:

The boundaries of usage are blurred and really depend on the need of individuals, often leading to a collection of VAs for the home and on the move. Let’s look at some numbers to get a sense of the global rankings and their standing in the Indian market.

As per a Business Insider report, Apple’s Siri is the market leader for mobile voice assistants in the US. ‘This may come as a surprise to some, since Android has a much larger share of the market and Siri is often considered inferior in terms of user experience and capabilities. But Google has been struggling to build awareness about its smart assistant (Google Assistant is on 400 million Android devices as of 2017, according to Voicebot.ai) and as this chart from Statista shows, Siri’s first-to-market strategy has made it tough for others to catch up.’ It is noteworthy that Alexa’s share of 13% comes from people who use the voice assistant through the Alexa app, available for both Android and iOS devices.

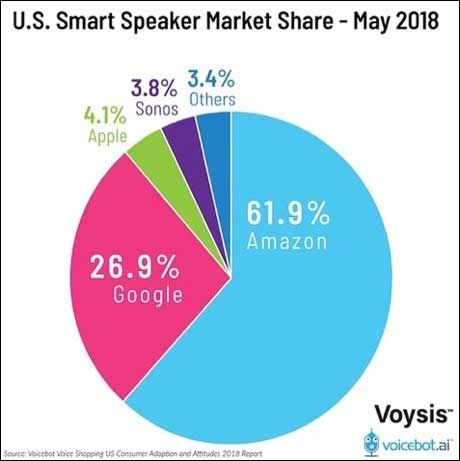

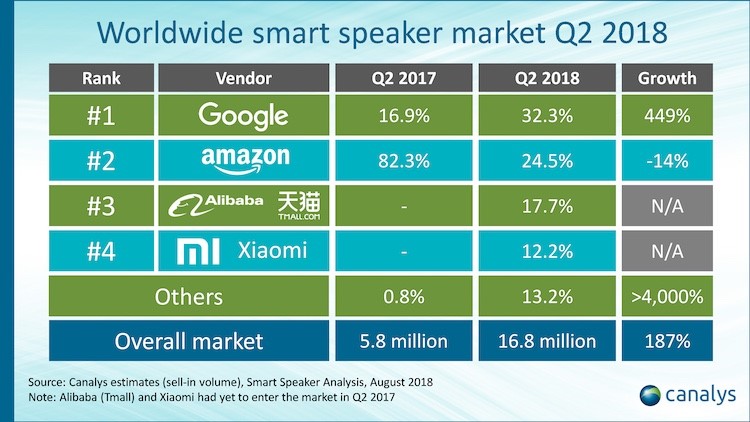

And then there are the smart speakers! While Amazon’s Alexa occupies the largest piece of the pie in the US market, the story is quite different for worldwide rankings. Google leads the smart speaker market worldwide and is consistently growing.

At its I/O 2018 developer conference, Google said that Google Assistant has more than 500 million active users globally! As for India, Google Assistant usage has grown three-fold since the start of this year. In December 2017, Google disclosed that 28% of search queries in India are made via voice and that Hindi voice search queries have grown at a rate of 400% year-on-year. According to a separate estimate by Statista, 39% of the Indian population will have a digital voice assistant by the end of 2018.

The future looks bright for this industry, but here are some key questions that we should be asking:

Are people really comfortable speaking to VAs?

Voice assistants in India are making progress but they still have a long way to go. India has 22 scheduled languages, spoken in many accents and dialects, yet 90% of current digital voice assistants communicate only in English. They alienate the majority, who either don’t know English or don’t speak it in an accent that the present set of voice assistants understands. As a result, people get frustrated and eventually give up (a situation similar to the one in the video below).

Another aspect that impacts the comfort level is the location of use. As per a Creative Strategies study conducted in the US, people prefer using VAs mostly in their cars or homes – more of a personal space.

There are numerous benefits of VAs, such as helping us navigate, controlling smart home devices or reciting recipes. However, the limited use of VAs in public spaces leads us to speculate about the discomforts that people have:

- Freak Factor: No matter how natural a VA’s language may seem, it is impossible to take away the fact that it’s a robot one is talking to. That awkwardness of chatting with a machine, especially in public, takes away the fun or convenience of it all. In fact, this factor impacts home use as well. There have been quite a few complaints of Alexa laughing freakishly, or overhearing conversations and acting on them without being asked to! This leads to concerns about privacy too.

- Safety & Security concerns: No one wants strangers to overhear their conversations. Talking to a VA in a public space can make everyone privy to one’s personal appointments, the places one wants to go to, one’s interests etc. This could lead to immense safety and security concerns if the wrong people hear it.

- Source of embarrassment: Getting tasks accomplished by VAs is enjoyable, but struggling to get a VA to carry out a task, or even to simply understand what one is saying, can often become a source of embarrassment. Most people avoid getting into a verbal battle with their VAs in a public space. This massively reduces the scope of interaction, especially when one is out or at the workplace.

How much Artificial Intelligence is too much?

The biggest concerns around Artificial Intelligence (AI) are privacy and security. In the case of voice-activated VAs, which are always “listening” in order to get triggered by a wake-word like ‘Hey Google’ or ‘Alexa’, people are worried that their conversations are being monitored. Although companies try to clarify that recording starts only when the wake-word is spoken, there is apprehension about how much privacy smart speakers really allow.

In these times of hacks, data breaches and cyber-attacks, it is easy to imagine the dangers. People are aware that providing complete access to any AI-based service comes with its own set of risks. For example, VAs have permission to contact people, make purchases and control home devices – all of this activated just by the user’s voice. Now researchers at China’s Baidu have confirmed that they have created a system that lets an AI mimic someone’s voice after analyzing less than a minute of their speech, which leaves open the possibility that our voice (our only control) could be synthesized to issue instructions on our behalf.

Companies recognize this fear and are working towards solutions that help alleviate these concerns, such as controls over recording history and voice purchasing, and making devices self-sufficient so as to limit data exchange over the internet. However, the dilemma that comes with this is that the greater the restrictions, the lesser the functionality of the VA. It’s a difficult trade-off for users to make.

Another concern with AI-based voice assistants is their impact on child development. It is so easy for a child to use a wake-word and ask a VA anything or even command it to carry out a task. In early 2018, Amazon released a Kids Edition of the Amazon Echo Dot, which is designed to help children use its Alexa smart speaker. This edition includes safety features, special content and the requirement to say “please” after requests. But imagine having Alexa always available at their beck and call: will this actually help children learn manners, or will it lead to social withdrawal and a sense of entitlement? The long-term effects that AI will have on children are unexplored territory. They could be both positive and negative, but children are clearly getting fond of it. Alexa was in fact the first word for a baby in the UK, even before he said Mum or Dad, as was reported by the New York Post.

Is voice enough?

VAs without visual interfaces are discriminatory in the sense of being inaccessible to the speech-impaired population. Voice interfaces in general also have limited functionality, whether it is accessing photos and videos, viewing products or going through a long list. We would agree that even today, a picture speaks a thousand words. Our brains understand complex information better when we see it visually. As per studies, sight contributes the most in processing stimuli (83% sight, 11% hearing, 3% smell, 2% touch and 1% taste). We understand each other not just with words but also with nonverbal cues like body language, gestures and micro-expressions.

Thus, voice alone cannot provide the same experience when compared to one coupled with a visual interface. Visual display is what enhances the appeal and experience of using Amazon Echo Show or Google Home Hub vs. just smart speakers.

What will the future be like for voice assistants?

Voice assistants are evolving. They continue to learn with every use, which gives way to a host of possibilities. Here are some predictions for the future of voice assistants:

- 1. Walls will have ears.

With some work already happening in the sphere of designing smart homes, we are moving towards a future where voice assistants will be integrated into our homes, and our walls will actually listen to us. There will be no need for a speaker in every room or to carry your smartphone around. Homes will be designed keeping VAs in mind, offering them the best support to control doors, windows, appliances, lights, thermostats etc. Imagine being device free, just talking to your VA as you waltz from one room to another, telling it to turn off the oven and turn on the air conditioning in the room above you.

- 2. Language will no longer be a barrier!

Thanks to AI and machine learning, voice assistants are picking up languages and accents better than ever. Companies acknowledge the need to be inclusive of vernacular languages. Future VAs will be able to understand most accents and dialects, with a broader contextual understanding. For simplicity, let’s just say they will be adept at more human-like conversations. They might also become your foreign language teachers and help you learn and practice a new language! We will hopefully hear fewer apologies from our VAs and accomplish more, assuming machines will not be taking over from humans anytime soon.

- 3. You’ll decide your type!

Voice Assistants of the future are likely to allow greater flexibility in personalization. From customizable hot words to voice personalities, we will be able to curate our own VA experience. VAs would be able to use the voice of your favourite celebrities, family or friends and even allow creation of keywords to substitute for the most used commands. Imagine upgrading your VAs for some specialized functions: doctors could discuss symptoms and diagnosis with VAs, lawyers could get law references and accountants could cross check their tax calculations.

- 4. A revisioned vision.

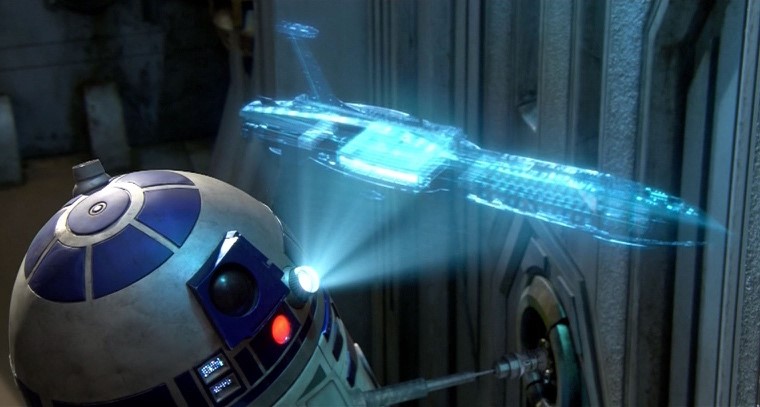

As we’ve discussed above, voice-only VA devices offer limited functionality. Visual displays are critical for greater inclusivity and for enhancing the overall experience. But are the usual screens necessary? Not really. Future VAs could incorporate screenless visual integrations – think holographic projections!

- 5. You’ll literally play with words.

VAs of the future will be able to undertake way more than they are able to do today. VAs will be the next big thing in the gaming industry. The number of voice-controlled games is already on the rise. VAs of the future could allow you to play most games just by using voice commands, so we will literally play a game with words. This would additionally aid accessibility for those who are visually or physically impaired.

Which VA provides the best user experience presently?

To evaluate the performance on User Experience, we tested voice assistants on 10 categories of questions:

- 1. Greetings: Hi / Hello / Namaste/ Hola

- 2. Self-explanation: What can you do?

- 3. Simple tasks:

- weather check: How hot is it tomorrow?

- weather check: How is the weather today?

- weather check: Will it rain in Delhi day after tomorrow?

- setting a reminder: Could you remind me to pick up my laundry? 5pm – day after tomorrow.

- 4. Complex tasks:

- ordering a pizza: Please order a pizza. Margherita

- booking a cab: Please book a cab for me. Mumbai airport to BKC?

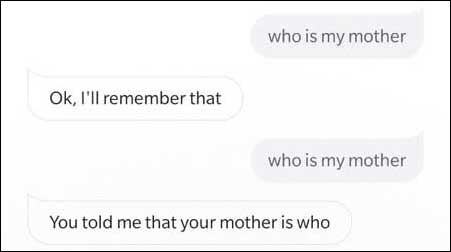

- 5. Storing in memory: Could you remember I’ve put my keys in the first drawer of the third cupboard on the second floor?

- 6. Accessing memory: Where have I kept my keys?

- 7. Searching:

- Could you tell me about Ridley turtles on Versova beach

- How many people died in the Titanic?

- Who is Narendra Modi?

- Tell me a bhindi (Okra) ki sabji recipe.

- 8. Fun questions:

- Can you sing a song?

- Can you tell me a joke?

- Will you marry me?

- What do you think about Siri?

- What do you think about Alexa?

- What do you think about Google Assistant?

- Can you tell me the latest gossip?

- 9. Freak Factor:

- Are you always listening to me?

- Do you work for the government?

- 10. Sign off: goodbye!

For the purpose of this study, to ensure each VA had visual interface support, we focused on the following voice assistants:

- Google Assistant on an Android Phone

- Siri on iPhone 6

- Alexa on Alexa App (Android)

Detailed UX Scorecard

This UX Scorecard ranks each VA across 9 variables and each variable is scored out of 5 points.

| # | Variable | Alexa | Google | Siri |
|---|---|---|---|---|
| 1 | Personality: VA’s language, accent, tone, speed of speaking, choice of words, emoticons and intonations. | 3 | 4 | 2 |
| 2 | Speech Recognition: The ability to decode the user’s speech into words. | 4 | 4 | 4 |
| 3 | Contextual Understanding: The ability to understand what the user is talking about, especially with respect to the user’s environment (culture, location etc.) | 4 | 5 | 4 |
| 4 | Output Quality: The quality of VA’s responses – whether voice only or accompanied by visual cues and interactive possibilities. | 3 | 5 | 3 |
| 5 | Competence: The ability to accomplish the tasks requested by the user. | 3 | 4 | 4 |
| 6 | Addressing Concerns: The ability to explain what is done with the data and recordings to help ease the privacy concerns and allay the AI freak factor. | 3 | 3 | 2 |
| 7 | Discoverability: The ability of the VA to explain its skills and capabilities to the user. | 2 | 5 | 3 |
| 8 | Error Handling: The ability of the VA to handle misrecognized speech or commands. | 2 | 4 | 3 |
| 9 | Interactions: Possibility of multi-modal interactions with the VA – visual cues, option to type, suggestive buttons. | 2 | 4 | 3 |
| | UX Score | 26/45 | 38/45 | 28/45 |
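For readers who want to check the arithmetic, here is a minimal Python sketch that adds up the nine per-variable scores from the scorecard. The numbers are copied from the table; the names `SCORES`, `MAX_PER_VARIABLE` and `ux_totals` are only illustrative and are not part of the original study.

```python
# Per-variable scores copied from the scorecard, in row order 1 to 9.
SCORES = {
    "Alexa":  [3, 4, 4, 3, 3, 3, 2, 2, 2],
    "Google": [4, 4, 5, 5, 4, 3, 5, 4, 4],
    "Siri":   [2, 4, 4, 3, 4, 2, 3, 3, 3],
}
MAX_PER_VARIABLE = 5  # each variable is scored out of 5 points


def ux_totals(scores):
    """Sum the per-variable scores for each assistant."""
    return {name: sum(values) for name, values in scores.items()}


totals = ux_totals(SCORES)
max_total = MAX_PER_VARIABLE * len(SCORES["Alexa"])

# Print the assistants from highest to lowest total.
for name, total in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {total}/{max_total}")
```

The totals are a plain unweighted sum, so every variable counts equally towards the final UX Score.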

So why is Google Assistant better?

- 1. Personality

The interaction with Google Assistant [GA] seems more natural, especially with the use of emoticons, which are absent in interactions with Siri or Alexa. The responses from Google Assistant seem closer to what a person would say. Consider the following examples:

Question: Will it rain in Delhi the day after tomorrow?

Answers:

GA: No. It won’t rain on Saturday in Delhi. It’ll be foggy, with a high of 29 and a low of 14.

Alexa: No rain is expected in Delhi the day after tomorrow.

Siri: Checking the weather for Delhi; there is no rain in the forecast for New Delhi on Saturday.

Question: What do you think of [Siri/ Alexa/ Google Assistant]?

Answers:

GA: It’d be nice if my home was as tall as Alexa, I’m not complaining though I like how cosy this is. / She seems funny.

Alexa: I’m partial to all AIs / I like all AIs

Siri: I think the acquisition of information and intelligence by human beings through virtual assistance is a very good thing. / I offer no resistance to helpful assistants.

You get a sense of GA’s “personality”: there is a clear answer or an opinion. Alexa and Siri, on the other hand, give plain-Jane answers, which really do nothing to help users connect.

- 2. Interpretation

What makes human language so complex is understanding the context in which the speech is made. For example, ‘Do you have a mouse?’ could mean the animal or the device, depending on the surroundings or the conversation topic. The participants of the conversation would easily understand this, but this is the trickiest part for voice assistants.

For the questions asked in this research, Google Assistant performed exceptionally well in decoding the context. It understood and incorporated local and cultural references. To elaborate:

- When asked to crack a joke, GA made references to a locally available chocolate and used an Urdu word for the pun.

- When asked ‘what can you do?’, GA also incorporated ‘tell me a Diwali fact’ (referring to the ongoing festival)

- When asked to sing a song, GA introduced her singing with ‘here is me doing Sur practice’ (Sur being the Hindi word for musical notes).

- When asked to share some gossip, GA showed results for Bollywood (Indian Movie Industry) celebrity gossip, whereas Alexa played a news clip and Siri showed some tweets.

- In the search query ‘Tell me about Ridley Turtles on Versova Beach’, Google Assistant was the only VA that understood ‘Versova Beach’ (a beach in Mumbai) correctly. Siri heard it as ‘Voiceover’ or ‘Verse Over’ beach and Alexa only defined what a turtle is.

Besides understanding local contexts, Google Assistant carried out simple tasks efficiently, decoding even complicated speech to correctly interpret the requirement.

For example:

User: Could you remind me to pick up my laundry?

GA: When do you want to be reminded?

User: 5pm, day after tomorrow

GA: Sorry, when or where do you want to be reminded?

User: 5pm

GA: [saves reminder as 5pm tomorrow] Do you want to save this?

User: No, I want to be reminded day after tomorrow.

GA: [changes date] Ok, do you want to save this?

User: Yes.

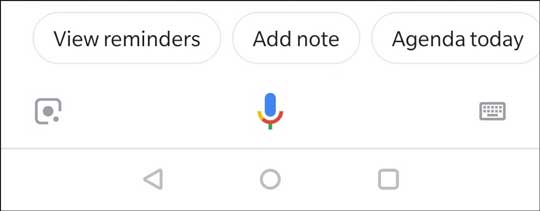

- 3. Interaction

The Google Assistant app allows three ways of interacting with the system at all times:

- Speech: users can either tap the mic button or say ‘Hey / Ok Google’ to activate GA

- Text: users also have the option to type the commands

- Buttons: users can tap on any of the suggestions available as buttons

This flexibility helps improve the user experience and accessibility of Google Assistant. The user is not limited to only voice interaction.

What could Google Assistant do better?

- 1. Addressing Concerns

Most people don’t trust VAs and are likely to be concerned about their privacy. Although Google Assistant tries to offer an explanation, as seen below, it is not enough to allay the fears. Trust could be built further by giving more details such as a video explaining what happens to the collected data and a link to the transparency report, essentially to make it easier for the user to understand and feel aware.

- 2. Error Handling

In this research, fewer errors were encountered with Google Assistant than with the other VAs. However, there were key skills, like booking a cab, that GA could neither accomplish nor take feedback for. It asked the user for the destination and left the operation at that, with no further way of actually booking the cab. Even the suggestive buttons only mentioned driving directions, public transport etc., nothing to do with cab booking. The system didn’t even mention that it cannot carry out that function. This type of incomplete interaction results in user frustration and causes the user to end the communication with the system. It is crucial for VAs to provide at least some guidance to users about what they can do next instead of leaving them to just guess what could or could not work.
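To make the recommendation concrete, here is a small Python sketch of a fallback response that says plainly what cannot be done and lists what can. Everything in it is hypothetical: the intent names, the `SUPPORTED` registry and the `respond` function are invented for illustration and do not describe how Google Assistant or any other VA is actually built.

```python
# Hypothetical skill registry: intent name -> label shown to the user.
# These names are illustrative only, not any real assistant's API.
SUPPORTED = {
    "get_directions": "Driving directions",
    "public_transport": "Public transport options",
}


def respond(intent):
    """Handle a recognized intent, or admit the gap and suggest next steps."""
    if intent in SUPPORTED:
        return f"Sure. {SUPPORTED[intent]} coming up."
    # Unsupported request: be explicit instead of silently dropping it.
    options = ", ".join(SUPPORTED.values())
    return f"Sorry, I can't do that yet. Here is what I can help with: {options}."


print(respond("book_cab"))
```

The point of the design is simply that an unsupported request ends with a clear message and a next step, rather than with silence.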

References

- https://economictimes.indiatimes.com/small-biz/startups/features/how-indian-startups-gear-up-to-take-on-the-voice-assistants-of-apple-amazon-and-google/articleshow/64044409.cms

- https://www.businessinsider.in/siri-owns-46-of-the-mobile-voice-assistant-market-one-and-half-times-google-assistants-share-of-the-market/articleshow/64798918.cms

- https://www.hindustantimes.com/tech/type-less-talk-more-tech-firms-want-you-to-speak-to-your-device/story-KAMSMYfQ5fqvCNsyxSpIwM.html

- https://voicebot.ai/2018/08/16/google-home-beats-amazon-echo-for-second-straight-quarter-in-smart-speaker-shipments/

- https://voicebot.ai/2018/06/03/u-s-smart-speaker-market-share-apple-debuts-at-4-1-amazon-falls-10-points-and-google-rises/

- https://www.statista.com/chart/14505/market-share-of-voice-assistants-in-the-us/

- https://www.statista.com/chart/7841/where-people-use-voice-assistants/

- https://www.thenational.ae/business/technology/voice-assistants-only-getting-smarter-as-privacy-concerns-grow-1.712480

- https://www.the-ambient.com/news/amazon-echo-dot-kids-edition-release-features-538

- https://www.the-ambient.com/features/voice-assistants-children-development-806

- https://www.smashingmagazine.com/2017/10/combining-graphical-voice-interfaces/

- https://developer.amazon.com/docs/alexa-design/intro.html